TL;DR:

An AI that is easy to use doesn’t equal “skills”.

Governance doesn’t necessarily mean bureaucratic, slow processes. Done well, it brings clarity and freedom to act.

As a business owner, you should make sure the leaders of your portfolio companies are well trained in what matters and which risks to watch for.

And the leaders of those portfolio companies should not centralize all decisions about which AI to use and how to use it. Centralization reduces velocity and at the same time discourages employees from acting on their own responsibility. Even if they have a high-agency attitude, you would teach them not to take responsibility and risk on their own but to wait for whatever a manager decides. This causes frustration and slows down everything: motivation, speed of decision, and innovation. Even worse, it produces poor results. Nothing you want at all.

How to overcome this:

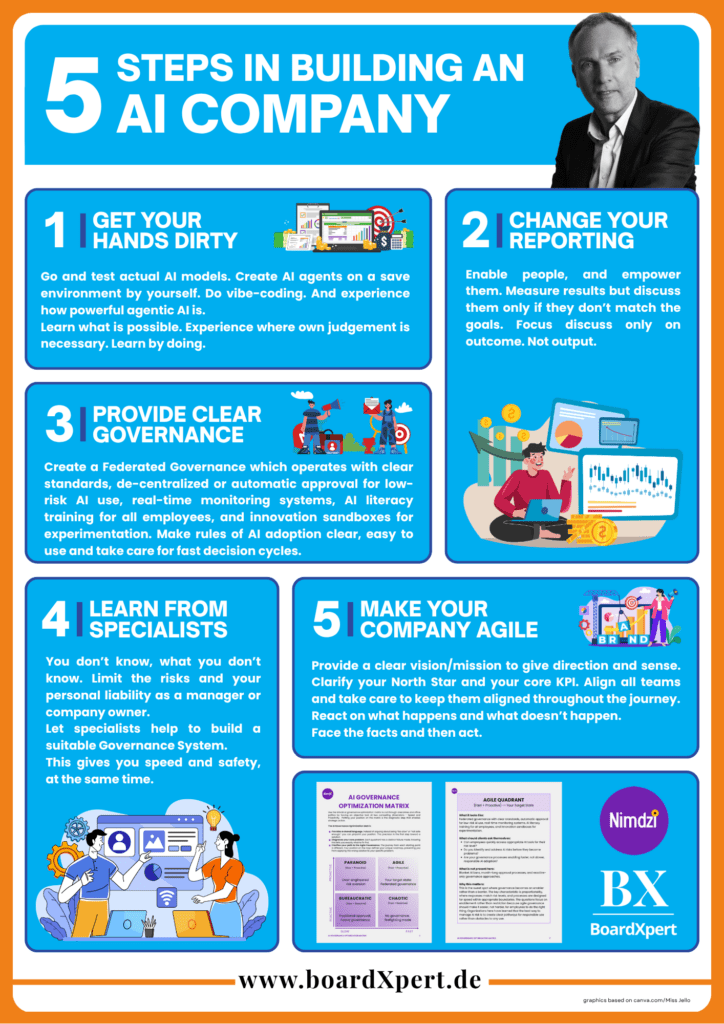

Create a so-called “federated governance” model, which sets clear general rules and provides automatic approval for low-risk AI use, real-time monitoring systems, AI literacy training for all employees, and innovation sandboxes for experimentation. This supports agility. Educate your employees so they are aware of the different categories of risk. Then set clear boundaries for what is to be decided decentrally and what is not.

Regarding the right skills: make your organization follow truly agile management principles. They are the key. Hand over real responsibility, not just tasks. To do so, you also need to set clear goals and milestones, and align those milestones across all departments.

In a bit more detail:

You can’t control what you don’t measure. It is as simple as that. But the more you control, the more time it takes, at least if you do it in a manual, centralized way.

Centralizing typically also means that people have to wait for someone else, who is then by definition a bottleneck. This is not only time-consuming but also very frustrating for those who sit and wait for a decision.

Developers love to say: “We don’t know what we don’t know.” Never heard it? Just go and ask them for a realistic date when a certain new software version will be deployed and ready to rock the market.

The same holds for the use of AI: nobody really knows exactly what each AI system is doing, and nobody can predict which output it will produce. Even worse, we have no way of knowing which data is really only being processed and which is also being used by the AI companies to train their models.

And how could we set clear rules about AI usage, permissions, and the necessary (data) protection if we don’t know exactly how this AI operates?

Well, to a certain extent we all need to rely on what the model vendors tell us about data usage and data privacy. And we certainly can (and need to) classify the content (the data) we hand over to or use within those AI systems. As part of the classification, we also define the risk that is directly connected with the respective data.
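To make the classification idea tangible, here is a minimal sketch in Python. The data classes (`public`, `internal`, `confidential`, `personal`) and the three risk levels are purely illustrative assumptions, not a standard and not taken from any vendor or framework; replace them with your own scheme.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical data classes and the risk attached to each one.
DATA_CLASS_RISK = {
    "public": Risk.LOW,
    "internal": Risk.MEDIUM,
    "confidential": Risk.HIGH,
    "personal": Risk.HIGH,
}


def risk_of(data_classes):
    """Return the highest risk among the data classes used in an AI use case."""
    # Unknown classes count as HIGH, so gaps in the scheme fail safe.
    # A use case that touches no data at all is rated LOW.
    return max(
        (DATA_CLASS_RISK.get(name, Risk.HIGH) for name in data_classes),
        default=Risk.LOW,
    )
```

The design choice worth noting is that the most sensitive data class determines the risk of the whole use case: mixing public and internal data is rated like internal data.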

For those who want assistance in this process, it is strongly recommended to bring in external specialists. A very positive example is the approach the team of Nimdzi experts demonstrates in their purple.nimdzi.com program: the Nimdzi AI Governance Matrix. They developed a simple yet very effective way to show clearly what your organization should look like and which measures are necessary to get there: to the “Federated Governance Status”.

This status is headlined “AGILE” and is described like this:

Enabling: “AI adoption is a strategic priority and is proactively enabled. The organization provides self-service platforms, innovation sandboxes, and training to empower employees. Governance is a supportive function that accelerates responsible AI adoption rather than slowing it down.”

But also: Integrated & Proportional: “Risk management is an integrated part of the AI development lifecycle. The organization has realtime monitoring, automated alerts, and a culture of continuous improvement. Governance is seen as a strategic partner that ensures the organization can move quickly and responsibly.”

From theory to practice:

Federated governance operates with clear standards, decentralized or automatic approval for low-risk AI use, real-time monitoring systems, AI literacy training for all employees, and innovation sandboxes for experimentation.

Key questions you should be able to answer with “yes” are:
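As a rough illustration of how such approval paths could be written down as rules, consider the following Python sketch. The three risk levels, the routing table, and names such as `AIUseRequest` and `submit` are hypothetical and only serve the example; they do not come from the Nimdzi matrix or any specific tool.

```python
from dataclasses import dataclass

# Hypothetical routing table: who decides at which risk level.
ROUTES = {
    "low": "auto-approved",
    "medium": "team lead decides",
    "high": "central governance review",
}


@dataclass
class AIUseRequest:
    team: str
    tool: str
    risk: str  # expected: "low", "medium" or "high"


# Every decision is logged so monitoring can happen without blocking anyone.
audit_log = []


def route(request):
    """Return the approval path; unknown risk levels go to central review."""
    return ROUTES.get(request.risk, "central governance review")


def submit(request):
    """Decide on a request and record the decision for later monitoring."""
    decision = route(request)
    audit_log.append((request.team, request.tool, request.risk, decision))
    return decision
```

A low-risk request such as `submit(AIUseRequest("marketing", "chat assistant", "low"))` is approved immediately, while anything rated high, or not rated at all, still ends up with the central function.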

- Can employees quickly access appropriate AI tools for their risk level?

- Do you identify and address AI risks before they become problems?

- Are your governance processes enabling faster, not slower, AI adoption while keeping it responsible?

Experience clearly shows that the best way to manage AI risk is to create transparent pathways for responsible use rather than obstacles to any use. The more obstacles arise, and the more complicated and time-consuming it is to get clear guidance on when and how to use AI systems, the more people tend to find unofficial ways to use them anyway. Just think of bring-your-own-device or the use of private accounts for company purposes: a nightmare for every IT security department and data privacy officer.

Do you feel you should speed up your AI governance?

Building, structuring, and running a company supported by or even based on AI changes a lot: the organization, speed, knowledge availability, but also the way people collaborate and take responsibility. This immediately creates the need for a different, or at least more nuanced, skill level for managers and employees alike.

The good news: there is nothing new in this. We don’t have to reinvent anything; we can simply copy and paste from what we already had with “agile organizations”.

The key difference today: AI empowers ALL employees across the whole organization to take advantage of knowledge and KPIs. If they don’t have the original corporate data, AI gives them the chance to bridge that gap by themselves. Even “assumptions” now come very close to reality very quickly, depending on the quality of the ‘discussion’ one is able to have with the AI model in use.

AI accelerates and boosts everything; it is super-fast. With agentic AI, you increasingly need the same set of skills you need to guide and collaborate with a team of humans.

In reality, there is not much difference between leading a team of humans (as a team leader or manager) and leading a number of AI agents.

So agile management principles are the key. Hand over real responsibility, not just tasks. And help by giving clear direction and guidelines.

Employees are ready for this change, but is your management team ready as well?

Top management now, more than ever, needs to provide a clear vision and mission. This gives direction and meaning. Why are we here, what are we doing, and for which purpose? What is our major focus goal, what is our North Star?

Based on that, the leadership team (2nd-, 3rd-line management, …) needs to define a suitable strategy that is aligned across ALL departments (“how do we get there?”). They also need to define ONE central KPI (how we measure progress toward our North Star), so every single action can and should be questioned: does this help to fuel our key KPI?

The core responsibility of the leadership team is to continuously align results and current forecasts across all departments and to judge whether the chosen strategy still fits the purpose and is the best way forward. They need to ask questions like:

- “Were and are our assumptions on xyz correct, and does the way we execute our strategy still lead to achieving our vision?”

- “Are we fast enough?”

- “Which external factors or changes might break our strategy in the short, mid, or long run?”

- “Where do we need to adjust our strategy, and what would be the impact on each department?”

- “We seem to be missing our original target in department X. What will be the impact on the ability of all other departments to achieve their targets?”

If not already done: turn reports into assessments. Where and how could I, or my department, increase speed or make the planned result more likely?

Discuss metrics only when something does not deliver the planned or expected result.

As a manager you need to actively ask:

- What does this leader or the respective team still need in order to achieve their (intermediate) goals?

- What impact on other teams is to be expected?

- What are the dependencies on other teams or departments?

- If anything does not support the ‘North Star’ and does not help to achieve the vision: stop it immediately.

If everyone is aligned to one vision and one goal, and every team consistently measures success, dependencies, and impact and aligns them with all related departments, this will skyrocket results, satisfaction, and fun at work.

As an investor: could you imagine a company with growing results, well-oiled processes, a high satisfaction level, and a healthy working atmosphere that would not be a highly interesting target for follow-up investors?

Do you want your portfolio company to reach such a state?